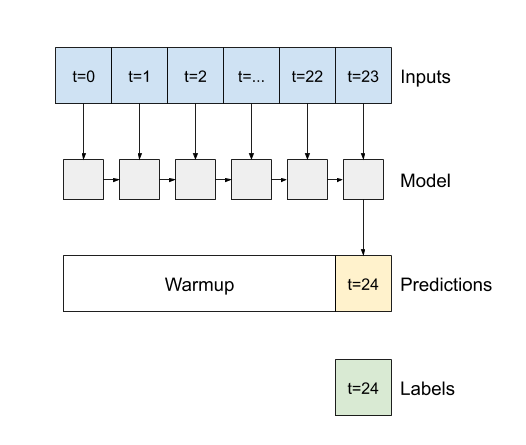

I am currently receiving one of the following errors (depending on the sequence of data prep):

    TypeError: Inputs to a layer should be tensors. Got: <_VariantDataset shapes: OrderedDict

Background: I have some parquet files, where each file is a multivariate time series. Since I am using the files for a multivariate time-series classification problem, I am storing the labels in a single numpy array. I need to use tf.data.Dataset for reading the files, since I cannot fit them all in memory.

Here is a working example that reproduces my error:

    import pandas as pd
    import tensorflow as tf
    from tensorflow.keras.layers import Masking, LSTM, Dropout, Dense

    df = pd.DataFrame([...]
    [...].parquet".format(i) for i in range(num_train_files, num_files)]
    # [...]
    y_val = labels

    # [...]
    common_ds = [...]_parquet(files, columns=columns_init)
    # [...]
    ds = [...]_parquet(file_name, columns=columns_init)

    def pack_features_vector(features, labels):
        """Pack the features into a single array."""
        features = tf.stack(list(features.values()), axis=2)
        # [...]

    train_names_ds = make_common_ds(train_names)
    train_names_ds = train_names_ds.batch(num_timesteps)
    train_label_ds = tf.data.Dataset.from_tensor_slices(y_train)
    train_ds = tf.data.Dataset.zip((train_names_ds, train_label_ds))
    train_ds = train_ds.shuffle(buffer_size=num_train_files)
    train_ds = train_ds.map(pack_features_vector)

    # [...]
    val_names_ds = val_names_ds.batch(num_timesteps)
    val_ds = tf.data.Dataset.zip((val_names_ds, val_label_ds))
    val_ds = val_ds.map(pack_features_vector)

    ip = Input(shape=(num_timesteps, num_features))
    # [...]
    model.[...]

Today, we pick up on the plan alluded to in the conclusion of the recent post Deep attractors: Where deep learning meets chaos: employ that same technique to generate forecasts.

"That same technique," which for conciseness I'll take the liberty of referring to as FNN-LSTM, is due to William Gilpin's 2020 paper "Deep reconstruction of strange attractors from time series" (Gilpin 2020).

In a nutshell, the problem addressed is as follows: A system, known or assumed to be nonlinear and highly dependent on initial conditions, is observed, resulting in a scalar series of measurements. The measurements are not just (inevitably) noisy; they are also, at best, a projection of a multidimensional state space onto a line.

Classically in nonlinear time series analysis, such scalar series of observations are augmented by supplementing, at every point in time, delayed measurements of that same series, a technique called delay coordinate embedding (Sauer, Yorke, and Casdagli 1991). For example, instead of just a single vector X1, we could have a matrix of vectors X1, X2, and X3, with X2 containing the same values as X1 but starting from the third observation, and X3 starting from the fifth. In this case, the delay would be 2, and the embedding dimension 3. If these parameters are chosen adequately, it is possible to reconstruct the complete state space. The underlying theorems, however, assume that the dimensionality of the true state space is known, which in many real-world applications won't be the case.

This is where Gilpin's idea comes in: Train an autoencoder whose intermediate representation encapsulates the system's attractor. Not just any MSE-optimized autoencoder, though. The latent representation is regularized by false nearest neighbors (FNN) loss, a technique commonly used with delay coordinate embedding to determine an adequate embedding dimension. False neighbors are points that are close in n-dimensional space, but significantly farther apart in n+1-dimensional space. In the aforementioned introductory post, we showed how this technique allowed us to reconstruct the attractor of the (synthetic) Lorenz system.

We first describe the setup, including model definitions, training procedures, and data preparation.

Setup

From reconstruction to forecasting, and branching out into the real world

In the previous post, we trained an LSTM autoencoder to generate a compressed code, representing the attractor of the system. As usual with autoencoders, the target when training is the same as the input, meaning that the overall loss consisted of two components: the FNN loss, computed on the latent representation only, and the mean-squared-error loss between input and output. Now for prediction, the target consists of future values, as many as we wish to predict. Put differently: The architecture stays the same, but instead of reconstruction we perform prediction, in the standard RNN way. Where the usual setup would just directly chain the desired number of LSTMs, we have an LSTM encoder that outputs a (timestep-less) latent code, and an LSTM decoder that, starting from that code, repeated as many times as required, forecasts the required number of future values.
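To make the delay coordinate embedding example from earlier concrete (X1, X2, and X3 with a delay of 2 and an embedding dimension of 3), here is a minimal sketch in plain Python. The function name `delay_embed` and the toy series are assumptions made for illustration; they do not come from Gilpin's paper or from the post's own code.

```python
def delay_embed(series, delay, dim):
    """Build `dim` delayed copies of `series`, each shifted by `delay` steps.

    Hypothetical helper for illustration: the k-th returned vector starts at
    observation k * delay, and all vectors are trimmed to a common length.
    """
    n = len(series) - (dim - 1) * delay
    if n <= 0:
        raise ValueError("series too short for this delay and dimension")
    return [list(series[k * delay : k * delay + n]) for k in range(dim)]


# Toy scalar series of ten observations (assumed data).
series = list(range(1, 11))

# Delay 2, embedding dimension 3, as in the example in the text.
x1, x2, x3 = delay_embed(series, delay=2, dim=3)

# Each row pairs an observation with its two delayed counterparts.
embedded = list(zip(x1, x2, x3))
```

Here `x2` holds the same values as `x1` but starts at the third observation, and `x3` starts at the fifth, so each row of `embedded` is one point in the three-dimensional reconstructed space.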
